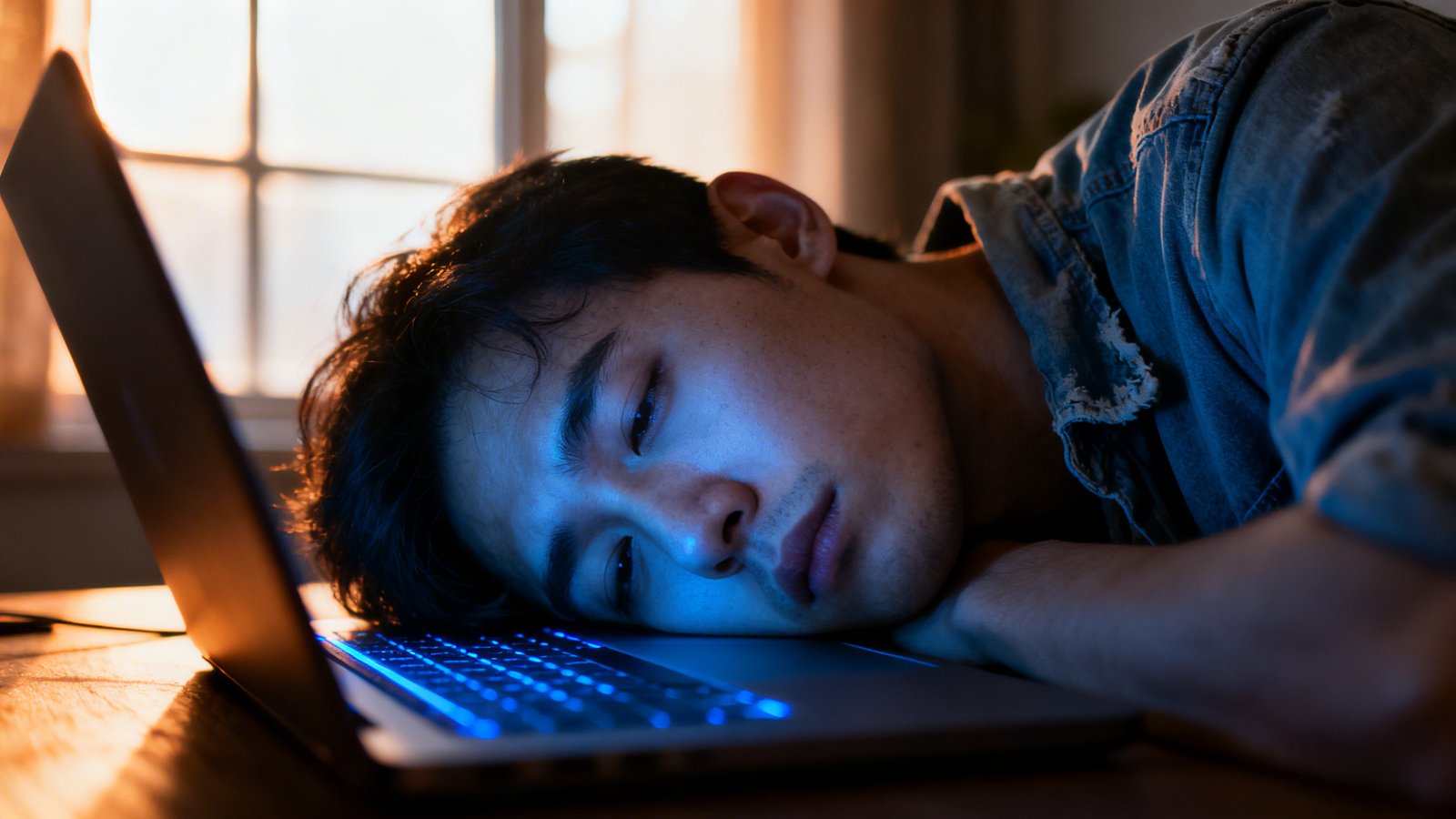

Okay, so here’s a thing I’ve noticed, and if you’re like me – someone who actually pays attention to what’s going on with this whole AI explosion – it probably hit you too, like a ton of bricks. We’re talking about burnout. But not just any burnout. The kind of burnout that’s hitting the very people who were singing AI’s praises the loudest. The early adopters, the ‘prompt engineers,’ the tech bros (and sisters, let’s be fair) who swore this was gonna change everything, make us all super-productive, give us back our weekends. Yeah, about that…

The ‘AI Will Save Us All’ Hangover

I mean, remember just six, maybe eight months ago? Everyone was buzzing. Every LinkedIn feed, every tech conference, every damn water cooler conversation was about how AI was going to be the magic bullet. It was going to write our emails, draft our code, design our presentations, even tell us what to eat for dinner. And the early adopters, man, they were on fire. They were experimenting, sharing tips, showing off their crazy AI-generated art or their perfectly structured business plans churned out in seconds. They were basically evangelists. And for a minute there, it felt like maybe they were right. Maybe this was the thing that would free us from the drudgery. Maybe we could all just kick back and let the robots do the boring stuff.

But then, something started to shift. Slowly at first. Little whispers on Reddit (yeah, I know, I spend too much time there, but sometimes you find the real pulse, you know?). People started saying things like, “I’m spending more time refining prompts than actually doing the work.” Or, “It’s like I’ve become a glorified spell-checker for a really fast, really confident idiot.” And then the burnout reports started coming in, not from the Luddites, not from the skeptics, but from the actual believers. The ones who spent hours tweaking parameters, learning the ins and outs of every new model. It’s like they ran a marathon, but the finish line just kept moving. And moving. And moving.

The Invisible Cognitive Load

Here’s the thing about this kind of burnout – it’s not physical. Nobody’s breaking their back lifting boxes because of AI. This is a mental grind. A cognitive drain. You’re constantly evaluating, refining, correcting, guiding an intelligence that’s both brilliant and utterly devoid of common sense. It’s like trying to teach a super-genius toddler to build a rocket – they have all the raw processing power, but you still gotta hold their hand and tell them not to eat the wires. That’s exhausting, right? It’s not just prompting, it’s managing a highly capable, yet inherently alien, co-worker. And who signed up for that, exactly?

So, Is ‘Working Smarter’ Just More Work?

This whole thing makes me wonder, what did we actually expect? Was it really going to be a magical genie that just granted wishes without any effort on our part? I mean, come on. Every new technology, from the printing press to the internet, has promised to make our lives easier, and in some ways, it has. But it’s also always introduced new complexities, new demands. The internet gave us instant information, but it also gave us 24/7 connectivity expectations and an endless scroll of doom. This feels like that, but cranked up to eleven.

“It’s like we bought a fancy new kitchen appliance that promises gourmet meals, but then we realized we still have to buy the ingredients, follow a recipe, and clean up the damn mess. And sometimes, the appliance just makes a really confident, very articulate pile of garbage.”

We’re sold this idea of “working smarter, not harder.” And AI, on the surface, seems like the ultimate tool for that. But if “smarter” means “spending half your day arguing with a chatbot to get it to understand basic human intent,” then I’m not sure we’re actually smarter. We’re just… different. And arguably, more stressed. It’s the pressure, you know? The pressure to constantly optimize, to produce more, faster, with fewer resources, all while wrestling with a tool that’s powerful but still needs a human in the loop to stop it from going completely off the rails. That’s a new kind of hell, if you ask me.

The Real Problem Isn’t The AI, It’s Us (Mostly)

Look, I’m not an AI hater. Not entirely. I think it’s got some incredible potential. But the problem, as I see it, isn’t the AI itself, it’s how we’re being pushed to use it, and frankly, how we’re choosing to use it. We’re trying to shove every single task through an AI lens, whether it makes sense or not. We’re treating it like a replacement for human thought, not an augmentation. And that’s where the wheels come off.

Think about it. The people getting burned out? They’re the ones trying to bend the AI to their will, to make it perfectly mimic human output, to get it to do 99% of the job. And that last 1%? That’s where all the effort, all the frustration, all the soul-sucking refinement comes in. It’s the uncanny valley of productivity. It looks almost right, but it’s just off enough to drive you absolutely bonkers.

And then there’s the expectation from on high. Management sees AI and thinks, “Great! Now everyone can do twice the work for the same pay!” They don’t see the hours spent wrestling with prompts, the mental fatigue of fact-checking every AI-generated paragraph, the sheer effort it takes to make something produced by a machine sound like it came from a human being with a pulse and a brain. They just see the promise of increased output. And that gap – the gap between what AI can do and what we force it to do – that’s where the burnout festers.

What This Actually Means

So, where does this leave us? I think it’s a huge wake-up call. It tells us that technology, no matter how advanced, isn’t a silver bullet for human problems. It’s a tool. And like any tool, it can be used wisely or unwisely. Right now, it feels like a lot of us are using a very powerful hammer to try and put in a very delicate screw, and we’re just smashing our thumbs in the process.

We need to figure out where AI truly shines and where it’s just adding more friction. It’s probably not in replacing nuanced human creativity or critical thinking entirely. It’s probably better at the grunt work, the data analysis, the pattern recognition. The stuff that’s genuinely boring and repetitive for humans. But if we try to make it do everything, we’re just going to end up with a lot of very stressed-out, very exhausted humans who feel like they’re babysitting a supercomputer. And honestly, who wants that? Not me, that’s for sure. My brain already feels like scrambled eggs most days, and I don’t need a robot adding more pepper to the pan. We gotta learn to work with it, not just be its reluctant editor. Or we’re all gonna be toast.